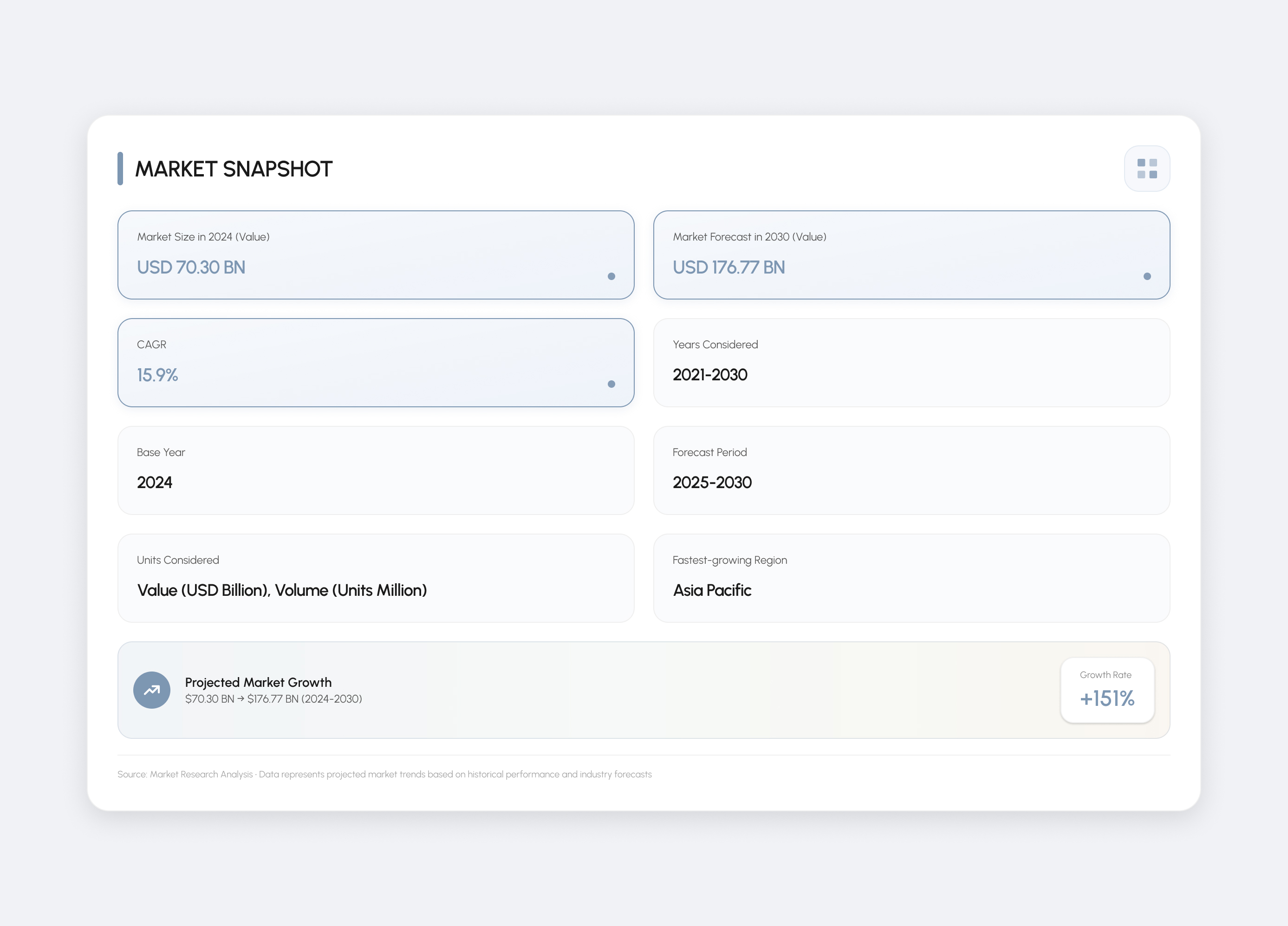

ACUITY • AI Health Wearable • 2026

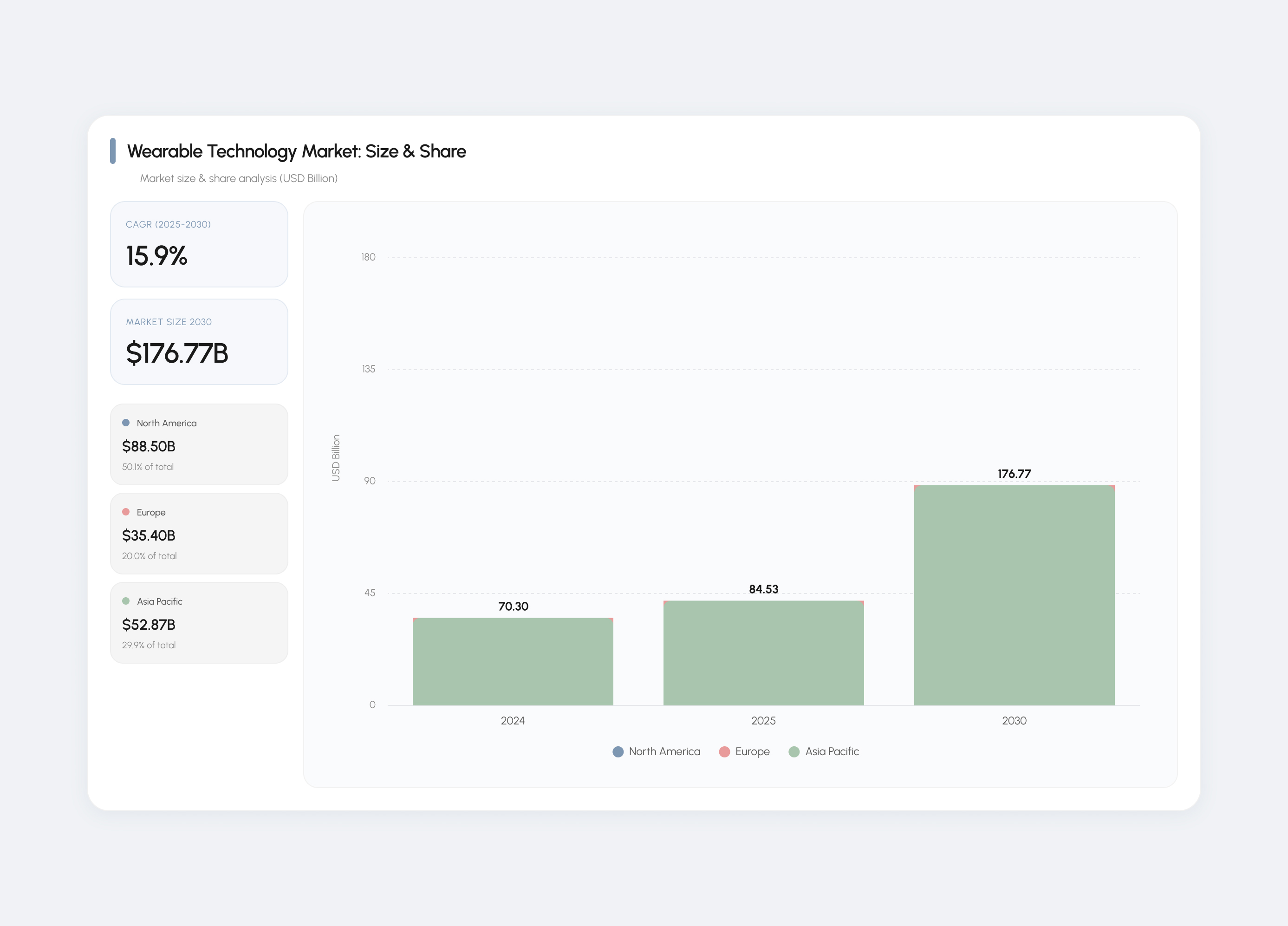

Helping people recognize early cardiovascular warning signs before a crisis.

Overview

Helping People Recognize Early Cardiovascular Warning Signs Before a Crisis

ACUITY is an AI-powered wearable system that detects early cardiovascular risk by comparing real-time biometric data against a user's personal baseline. It is designed for individuals experiencing ambiguous symptoms who lack clear guidance on when to act. The system translates physiological changes into clear risk states and a single recommended action. Developed in a 24-hour hackathon, the project focuses on decision-making clarity rather than data tracking.

The Problem

The Most Dangerous Moment in Healthcare Is the Uncertainty Before the Emergency

A heart attack survivor delayed seeking care because they assumed their symptoms were caused by stress. The signals were present, but there was no clear way to interpret them or act in time.

Early cardiovascular symptoms are often ambiguous, leaving people unsure whether to act or wait. Existing wearables measure health data but do not interpret, in real time, what changes in that data mean. As a result, users are left making high-stakes decisions without clear guidance, often delaying care when timing is critical.

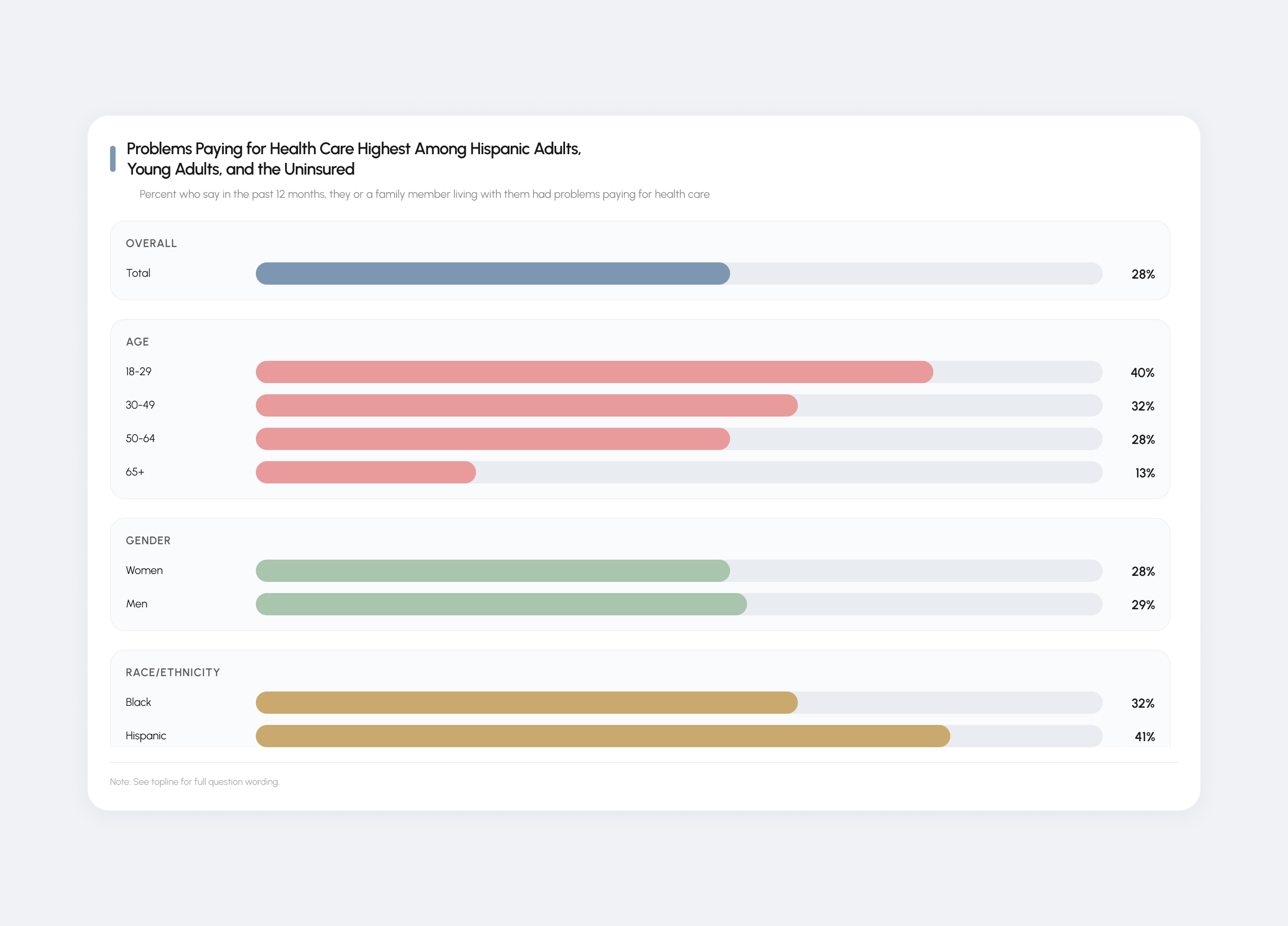

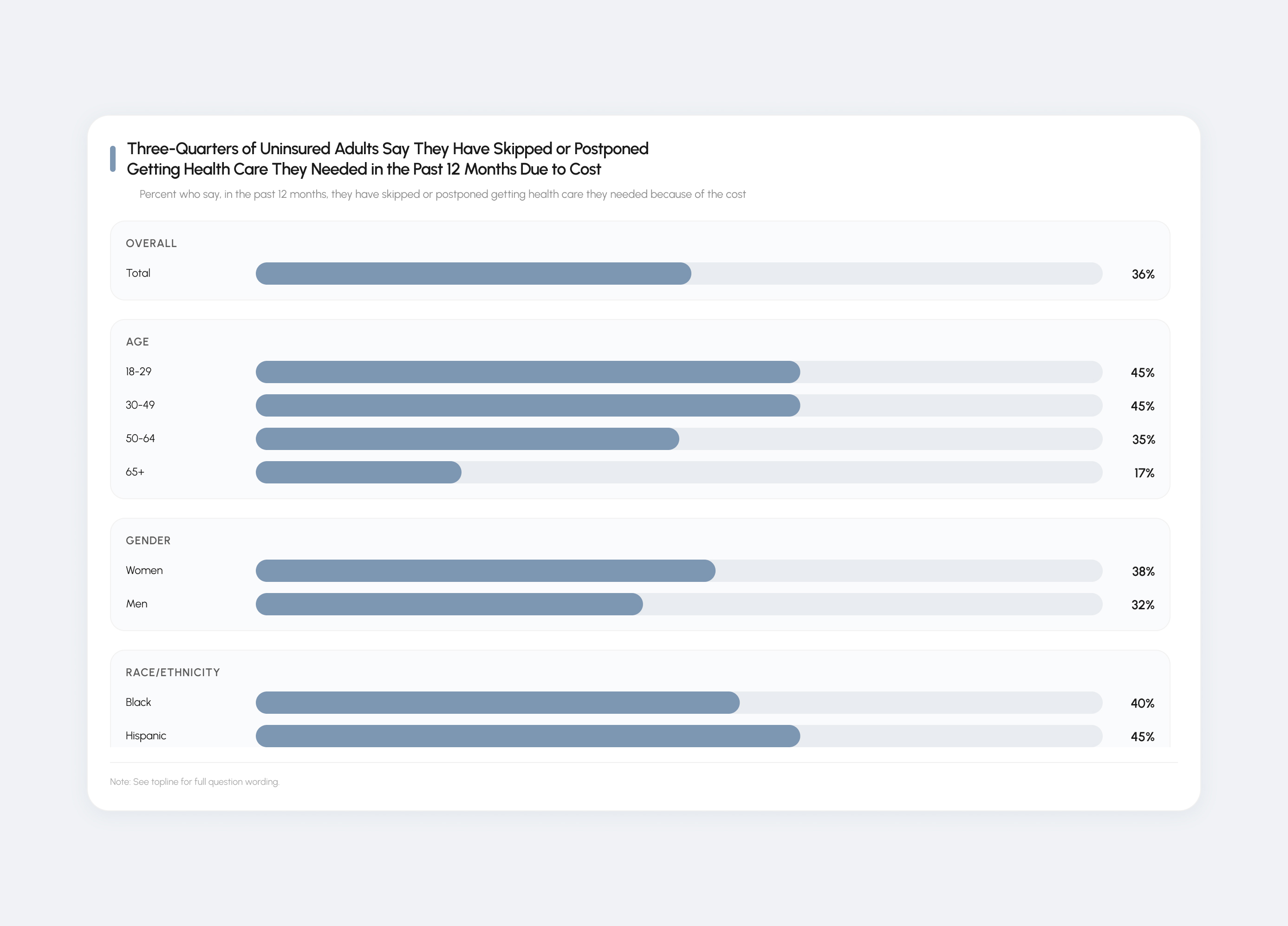

Research Insights

Understanding How Uncertainty Prevents Action

Three patterns shaped the direction:

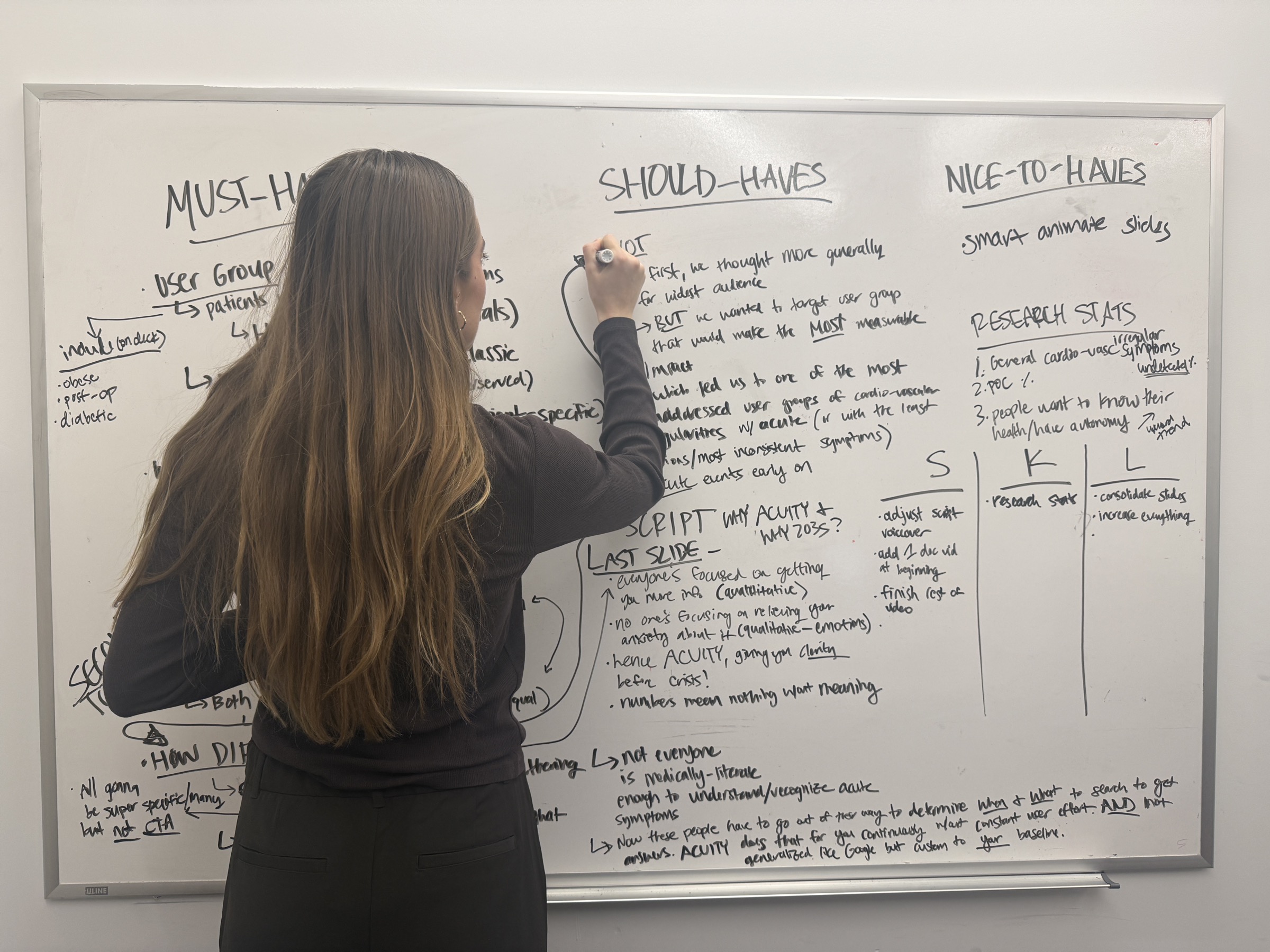

Defining the Opportunity

Making the Body's Signals Legible and Actionable Before Crisis

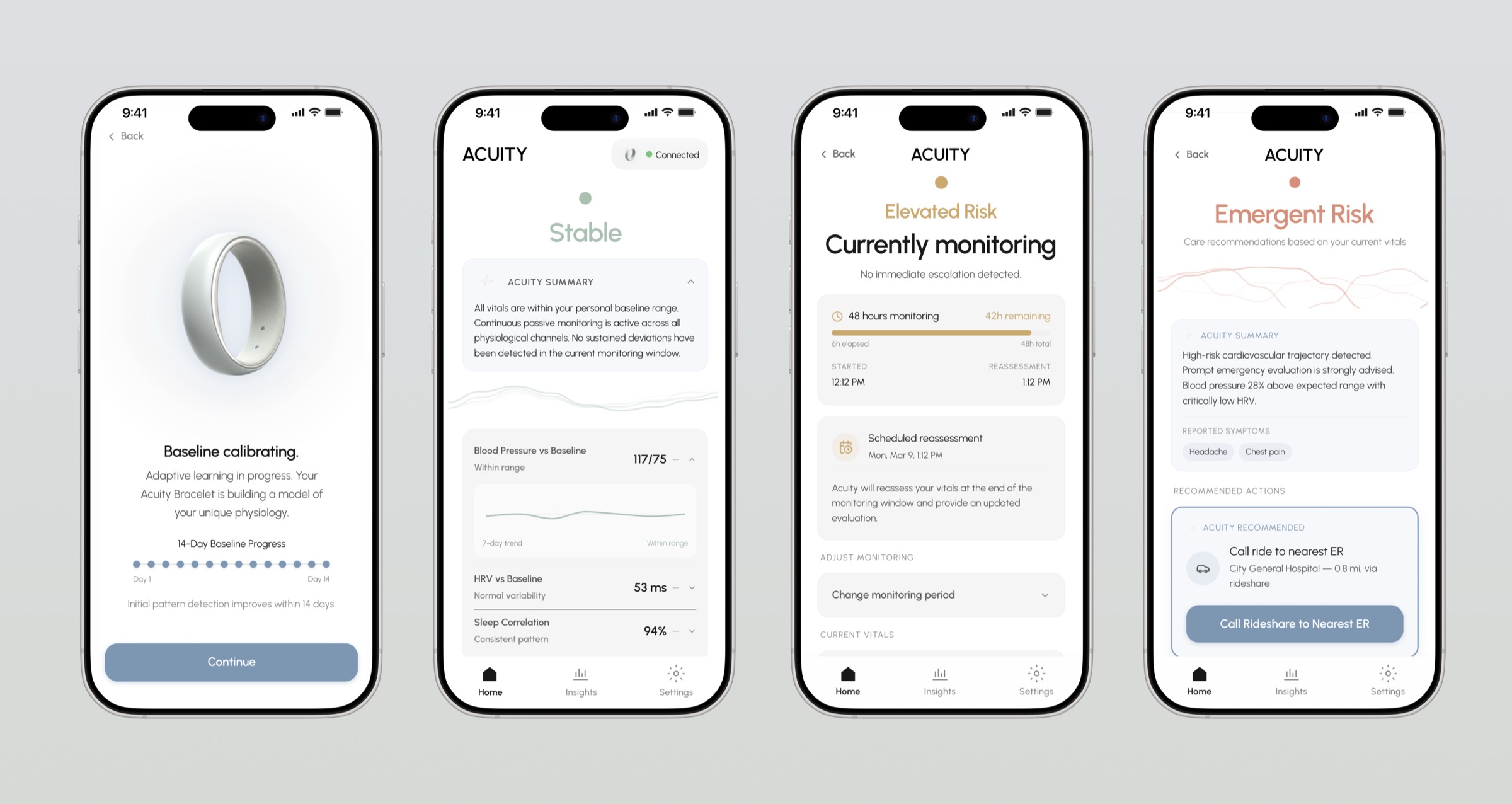

The direction shifted from broad health tracking to a focused decision-support system for the moment between symptom onset and action. Rather than expanding features, the system prioritizes translating personal biometric changes into clear risk states and one recommended next step.

This required removing dashboards, historical metrics, and generalized wellness insights in favor of real-time guidance in high-risk scenarios. The opportunity became a shift from passive monitoring to active intervention at the point where decisions break down.

Design Exploration

Designing a System Rather Than an Interface

The system was structured around a single output: what the user should do next. Early explorations included dashboards and multi-metric views, but these increased cognitive load and delayed decisions, so they were removed.

The team also explored expanding into service layers, including an on-demand EMT transport model, but this introduced legal and operational complexity beyond the scope of the system and was cut.

A key constraint was defining the role of AI. Rather than making medical decisions, the AI was limited to identifying risk and prompting action, so users remained in control while still receiving guidance.

Test & Iterate

Designing the Core Interaction Under Constraint

Early feedback showed the system felt too broad and undefined. The product was refocused on users experiencing early cardiovascular symptoms and the specific moment of deciding whether to act.

Initial concepts emphasized detection and data. Shifting the system to center on "what to do next" made the value immediately understandable.

Relying only on sensor data created gaps. Adding user input improved context and made risk classification more credible.
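One way to picture how user input could add context to a sensor-only classification is the minimal Python sketch below. It is an illustration under stated assumptions, not the team's implementation: the three risk labels, the list of flagged symptoms, and the "raise by one level" rule are all hypothetical.

```python
# Hypothetical ordered risk states, lowest to highest.
RISK_LEVELS = ["normal", "elevated", "high"]

# Hypothetical set of self-reported symptoms that add context to sensor data.
FLAGGED_SYMPTOMS = {"chest pressure", "shortness of breath"}


def adjust_for_symptoms(sensor_risk: str, reported_symptoms: set[str]) -> str:
    """Raise the sensor-derived risk by one level if a flagged symptom is reported."""
    index = RISK_LEVELS.index(sensor_risk)
    if reported_symptoms & FLAGGED_SYMPTOMS:
        # Cap at the highest level so "high" stays "high".
        index = min(index + 1, len(RISK_LEVELS) - 1)
    return RISK_LEVELS[index]


print(adjust_for_symptoms("normal", {"chest pressure"}))  # elevated
```

In this sketch, user input can only raise the risk state, never lower it. That is an assumed design choice that errs toward prompting action.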

Final Product

A Personal Health Decision Layer: From Signal to Action

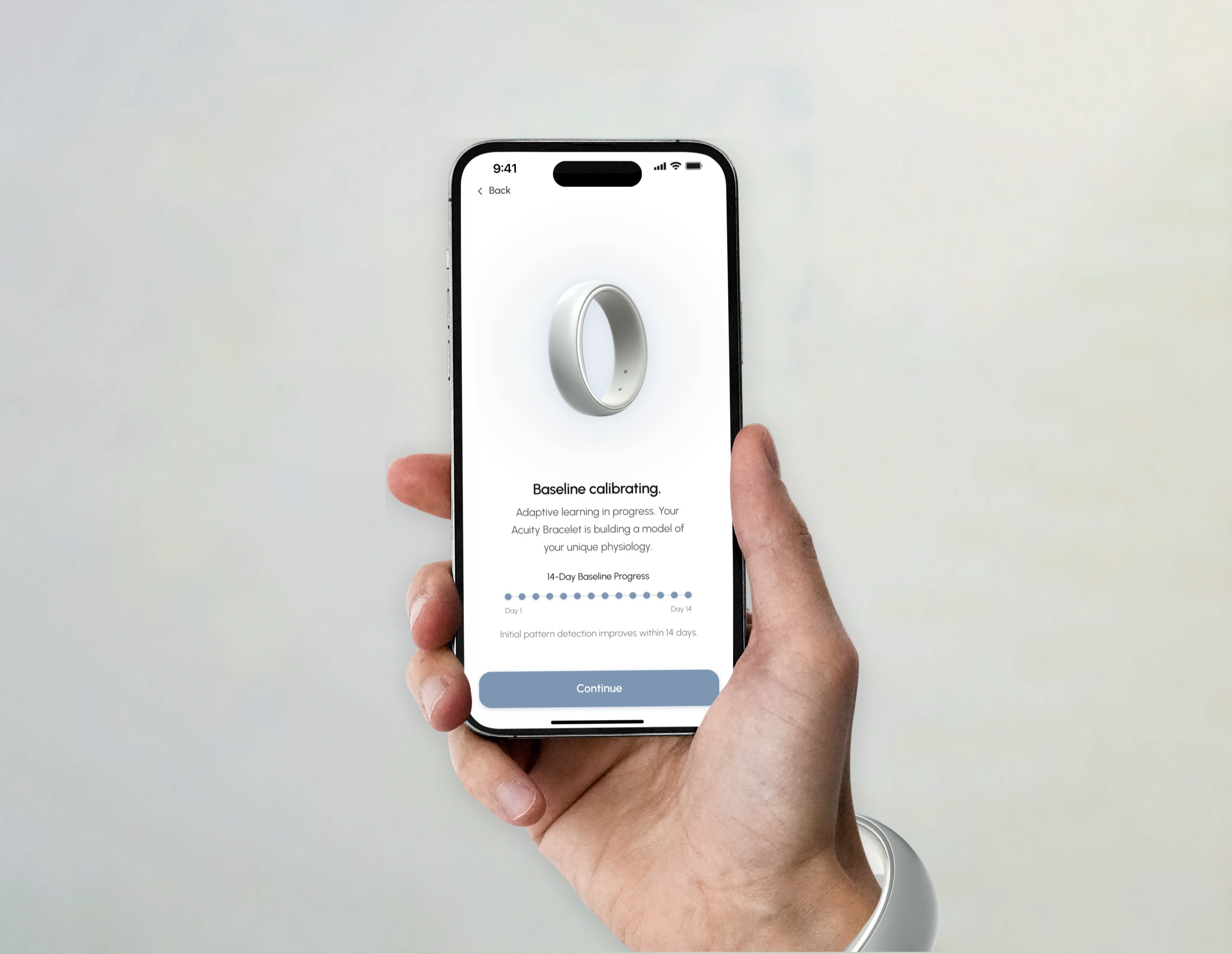

ACUITY operates as a real-time decision layer that interprets continuous biometric data against a personalized baseline. When a meaningful deviation is detected, the system classifies risk and delivers a single recommended action, removing the need for user interpretation.

Interaction is designed for moments of uncertainty, allowing users to quickly acknowledge and respond without navigating complex interfaces. The system closes the gap between sensing something is wrong and knowing what to do next.
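As a rough illustration of the decision layer described above, the Python sketch below compares a reading against a personal baseline and maps the deviation to one risk state and one action. The case study does not describe the actual risk model, so the z-score approach, the thresholds, the risk labels, and the action wording are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean, stdev

# Hypothetical thresholds, in standard deviations from the personal baseline.
ELEVATED_Z = 2.0
HIGH_Z = 3.0

# Hypothetical wording: exactly one recommended action per risk state.
ACTIONS = {
    "normal": "No action needed.",
    "elevated": "Rest and recheck in a few minutes.",
    "high": "Contact a medical professional now.",
}


@dataclass
class Guidance:
    risk: str
    action: str


def classify(baseline: list[float], reading: float) -> Guidance:
    """Classify a reading by its deviation from the user's own baseline.

    `baseline` needs at least two samples (e.g. resting heart rate in bpm).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    # A perfectly flat baseline gives no scale to measure deviation against.
    z = abs(reading - mu) / sigma if sigma > 0 else 0.0
    if z >= HIGH_Z:
        risk = "high"
    elif z >= ELEVATED_Z:
        risk = "elevated"
    else:
        risk = "normal"
    return Guidance(risk, ACTIONS[risk])


baseline = [60, 62, 61, 59, 63, 60, 61]
print(classify(baseline, 70).risk)  # high
```

The function returns a single `Guidance` value rather than raw metrics, mirroring the idea that the user sees one risk state and one next step.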

Impact

From Individual Clarity to Systemic Change

ACUITY reframes wearables from passive tracking to decision support, addressing the gap between detection and action. During the hackathon, feedback consistently focused on the clarity of the system and its emphasis on guiding users rather than overwhelming them with data.

The concept offers a scalable model for earlier intervention, particularly for users who fall outside clear diagnostic patterns and are most likely to delay care.

Reflection

From Story to System in 24 Hours

This project shifted my focus from designing interfaces to defining decision systems. Early concepts prioritized breadth, but the strongest outcome came from narrowing the system to a single, high-stakes output: what action to take.

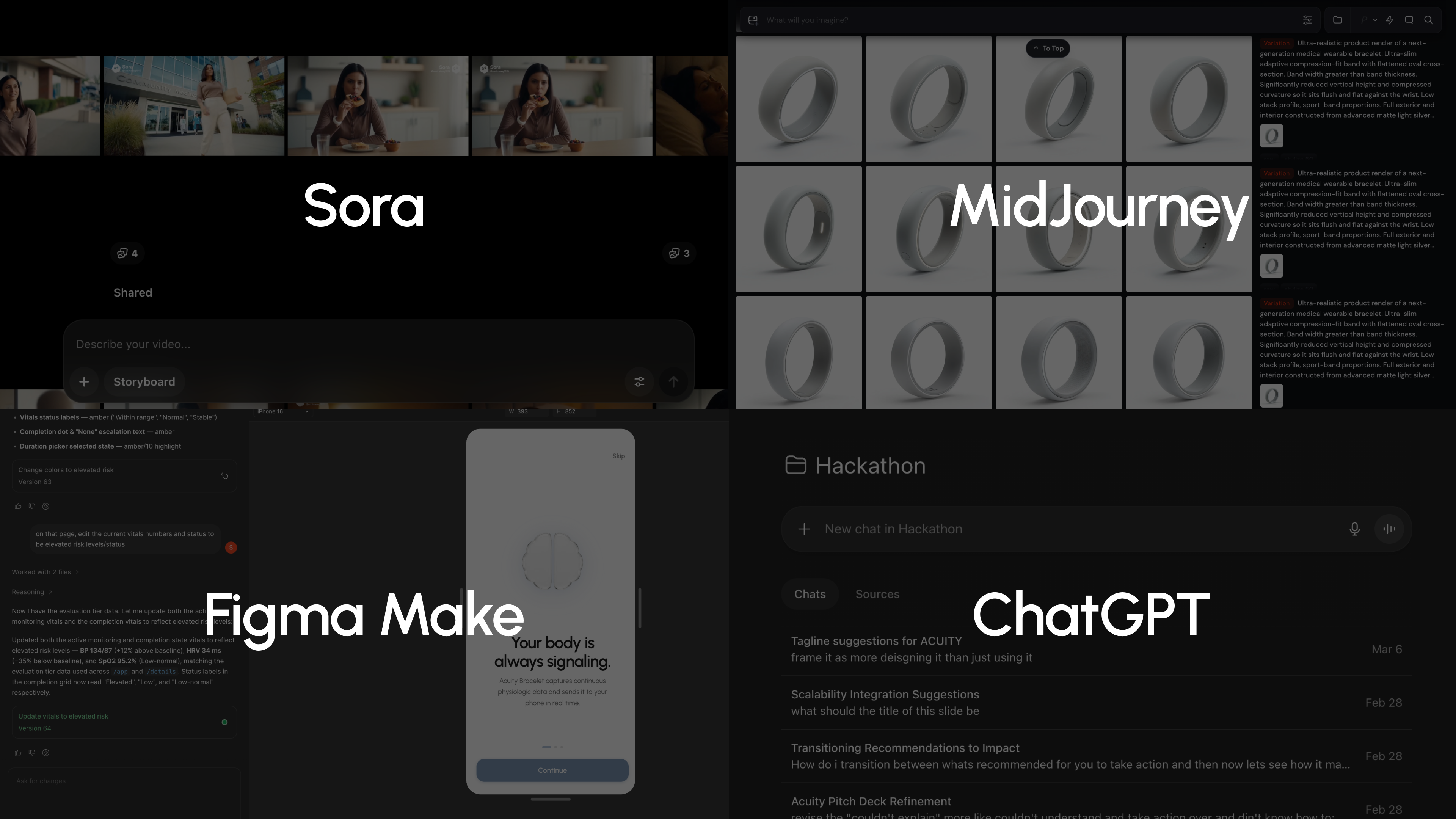

AI tools like Claude, Midjourney, and Figma Make accelerated prototyping and helped visualize the wearable and product flow, allowing the team to produce a more complete system within a compressed timeline.

I learned that speed comes from reducing scope and applying judgment, not increasing output. If extended, I would validate the risk model against real user scenarios and test failure cases where the system over- or under-reacts.

Full reflection published on Medium by Sooim Kang and Lucy Trepanier.

Presentation